Robotic Perception and Action

Academic Year 2018/2019 – SLAM Project

Askhat Issakov, Jonathan Smyth, Simone Zamboni, Javier Macías

1. Introduction

For thousands of years, education has relied on static 2D representations of the world and of the topics being taught. New technologies now make it possible to change this practice, improving both how students learn and the quality of lectures.

Following this idea, we built a self-contained lesson in Unity on Simultaneous Localization and Mapping (SLAM) using the Iterative Closest Point (ICP) algorithm.

The experience is interactive and immersive for the student, offering a practical and new way to learn the topic.

The lesson takes the form of a game whose main menu leads the player to different learning experiences. It is divided into three parts: a theoretical learning part, a static play mode that is easier to set up on other PCs, and a fully dynamic play mode.

2. Learning

The learning part is a theoretical lesson on the ICP-with-normals algorithm applied to SLAM. It is meant both as an aid for a professor teaching the topic and as a way for students to get an idea of how the algorithm works.

The lesson relies mainly on two tools: written instructions at the top of the screen, which provide a mathematical reference for what is happening, and simulated point clouds that show the ICP algorithm in action. The user can move around with a camera and decide when to move on to the next step of the lesson.
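
As a rough illustration of the mathematical reference mentioned above, the sketch below computes a point-to-plane cost, assuming that "ICP with normals" refers to this error metric (the report does not spell it out). The function names, the toy floor and the offsets are all hypothetical and are not taken from the project.

```python
# Sketch only: point-to-plane cost, ASSUMING "ICP with normals" means this metric.
# All names and values are hypothetical, not taken from the project.

def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))

def point_to_plane_error(source, target, normals):
    """Sum of squared distances from each source point to the tangent plane
    at its matched target point. Correspondences are given by index."""
    total = 0.0
    for p, q, n in zip(source, target, normals):
        diff = [pi - qi for pi, qi in zip(p, q)]
        total += dot(diff, n) ** 2
    return total

# Toy target: a flat floor z = 0 with unit normals pointing up.
target = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
normals = [(0.0, 0.0, 1.0)] * 3

# Toy source scan: shifted sideways by 0.5 and lifted by 0.1.
source = [(x + 0.5, y, z + 0.1) for x, y, z in target]

error = point_to_plane_error(source, target, normals)
print(error)
```

In this toy case only the 0.1 lift contributes to the cost: sliding along the floor is not penalised, which is the main difference from a point-to-point metric.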

3. Static Play

This learning experience is intended for low-end PCs and web builds: it shows the ICP algorithm in action but does not require the full technological stack described in section 5.

In this scene the user can move around with the main camera and watch what is happening: a drone inside a mine rotates in order to reconstruct it. The reconstructed mine is built step by step next to the original mine. By changing the camera view, the user can also see the perspective of the drone and of the “virtual drone” in the reconstructed mine.

This mode is not interactive, in the sense that the drone always rotates in a fixed direction. The mine is also always reconstructed in the same way, but the user can still move around and watch what is happening to get a general idea of what mapping with the ICP algorithm means.

4. Dynamic Play

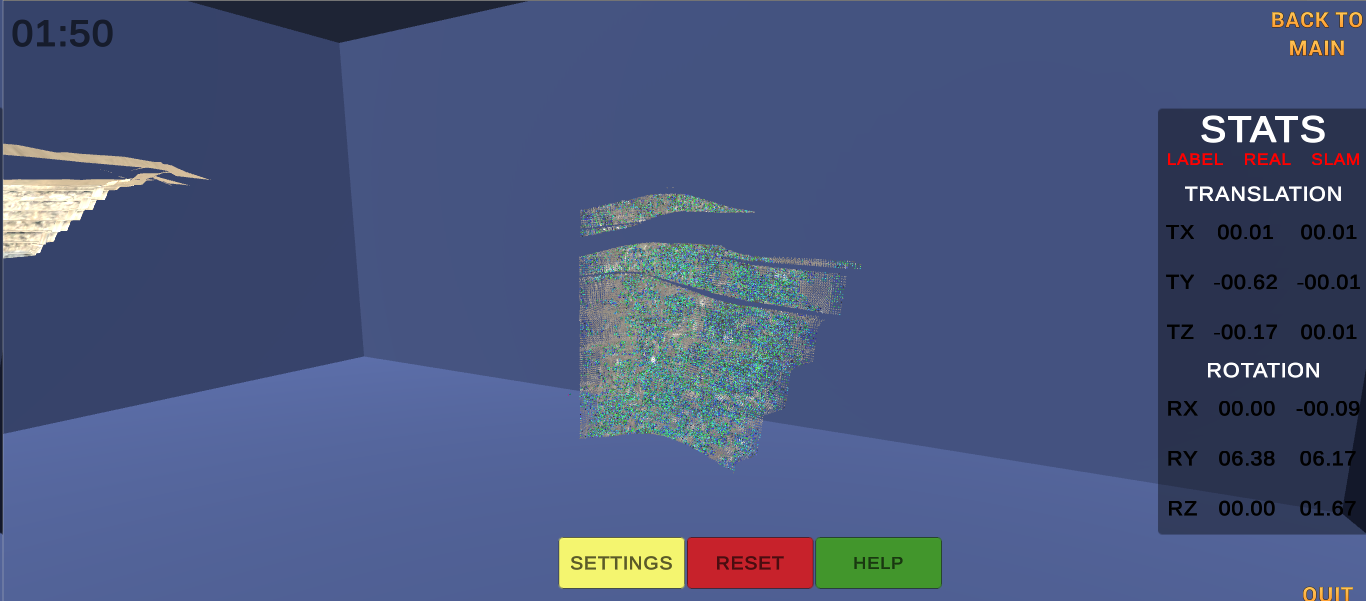

The idea of the dynamic play mode is to let the user simulate an actual time-of-flight (ToF) camera and perform SLAM live. This is done by simulating a drone to which the camera is attached. The drone can be controlled with the keyboard to fly over the mine while it captures a point cloud every few seconds. The image in this section shows the view of this camera, mounted on the drone, at an arbitrary point in the mine.

These points are sent through ZeroMQ to the back end, which runs on PCL 1.8 and applies the ICP-with-normals algorithm explained in the learning mode of the game. There, both the local and the global transformation matrices are computed, and the input cloud is transformed and sent back to Unity, where it is displayed. The position and rotation of the camera are also exported to be displayed in Unity.

Finally, the transformed point clouds are reconstructed and shown in Unity.
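
The relation between the local and global transformation matrices can be sketched as follows. This is a minimal illustration in plain Python, not the PCL back end: it assumes that each local matrix maps a new scan into the frame of the previous one, and that the global matrix is the running product of the local ones. The helper names and the two example transforms are hypothetical.

```python
# Sketch only: chaining per-scan ("local") transforms into a "global" one.
# ASSUMPTION: each local 4x4 matrix maps scan k into the frame of scan k-1.

def matmul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def apply(t, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))

def identity():
    return [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

def translation(dx, dy, dz):
    t = identity()
    t[0][3], t[1][3], t[2][3] = dx, dy, dz
    return t

def rot_z_90():
    """Rotation of 90 degrees about the z axis."""
    return [[0.0, -1.0, 0.0, 0.0],
            [1.0, 0.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]

# Hypothetical local results of two consecutive ICP alignments.
local_transforms = [translation(1.0, 0.0, 0.0), rot_z_90()]

global_t = identity()
for local_t in local_transforms:
    global_t = matmul(global_t, local_t)  # accumulate into the map frame

# A point seen in the latest scan, expressed in the map frame.
mapped = apply(global_t, (1.0, 0.0, 0.0))
print(mapped)
```

Under this convention the translation column of the global matrix also gives the position of the camera in the map frame, which is the kind of value that would be exported for display.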

5. Technological aspects

The front end of this project uses Unity to build the interface, while the back end, with the algorithms that perform SLAM, runs on PCL 1.8. The two communicate over a TCP connection with ZeroMQ, which polls and sends the information using a publisher-subscriber architecture. The back end is designed to run on Unix machines, while Unity usually runs on Windows.
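
The report does not describe how a point cloud is encoded inside a ZeroMQ message. Purely as an illustration, the sketch below uses a hypothetical framing (a point count followed by little-endian float32 x, y, z triples) whose bytes could serve as the body of a published message. The functions `pack_cloud` and `unpack_cloud` are invented for this example, and no ZeroMQ calls are shown.

```python
import struct

# Sketch only: a HYPOTHETICAL wire format for one point cloud.
# Layout: uint32 point count, then little-endian float32 x, y, z per point.

def pack_cloud(points):
    """Serialise a list of (x, y, z) tuples into bytes."""
    payload = struct.pack("<I", len(points))
    for x, y, z in points:
        payload += struct.pack("<3f", x, y, z)
    return payload

def unpack_cloud(payload):
    """Inverse of pack_cloud: recover the list of (x, y, z) tuples."""
    (count,) = struct.unpack_from("<I", payload, 0)
    return [struct.unpack_from("<3f", payload, 4 + 12 * i) for i in range(count)]

cloud = [(0.0, 0.5, 1.0), (2.0, -1.5, 0.25)]
message = pack_cloud(cloud)     # bytes a publisher could send
decoded = unpack_cloud(message) # what a subscriber would recover
print(len(message), decoded)
```

A fixed binary layout like this keeps the message compact and easy to parse on both the Unity side and the PCL side, at the cost of float32 precision.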

6. Conclusion

This project shows how new tools such as Unity can make learning more interactive and effective. From our experience working on this project, we can say that lessons using this new way of teaching need to be carefully designed to be effective, but when they are, they can greatly enhance students' ability to learn.